Data Fabric, Data Mesh Distilled

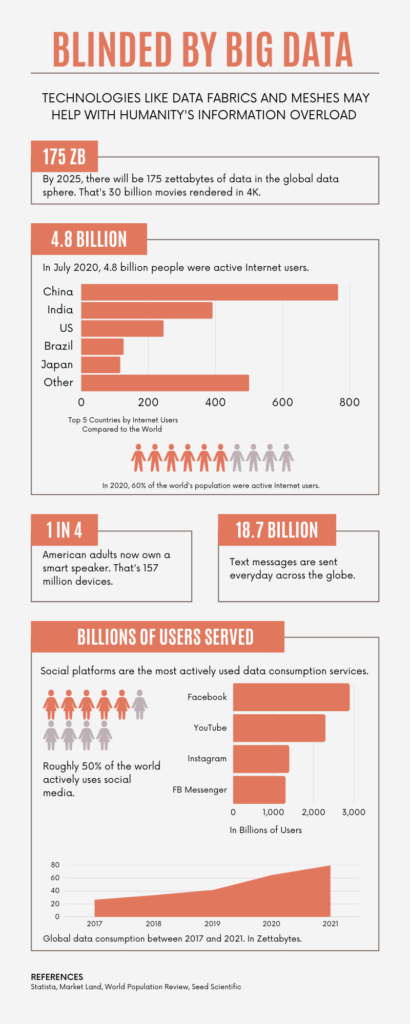

It’s a New Year and a new attitude! A lot has been said about data. As of 2021, an estimated 2.5 quintillion bytes of data were generated across the Internet every day, and Google processed 20 petabytes of data daily, including 3.5 billion search queries. Using the machines we depend on to live, work, and play, humanity generates enormous amounts of data. All of this data becomes information used to serve advertisements, perform credit scoring, detect fraud, and more. We generate so much data that we keep buying more storage and continuously devising clever ways to tag, catalog, and access it. The sheer volume makes it exceedingly difficult to find the data you need when you need it. Management consultant Geoffrey Moore says that without big data we are “blind and deaf.” Yet data and information are generated and captured so quickly that the sheer volume can blind us just as easily.

“Without big data, you are blind and deaf and in the middle of a freeway.”

-Geoffrey Moore

Every year, companies spend significant resources and many hours of staff time figuring out where to store specific data products and who should have access to them.

We’re going to start this year of RIViR Reads by talking about two relatively new concepts aimed at one of big data’s biggest challenges: data access and control.

The Data Fabric and the Data Mesh are two concepts the big data industry is promoting to solve the access and control problem. We’ll start with the Data Fabric.

Data Fabric

A Data Fabric is an architectural idea. A Data Fabric is a unified set of data services that share compute and storage infrastructure and expose standardized interfaces, so data can be accessed the same way whether it is kept in the cloud or on-premises. Data Fabrics support many different data storage and database technologies: a Data Fabric can host CSV files and other text formats such as JSON, as well as NoSQL and SQL databases. Data Fabrics work by overlaying data access software on top of dedicated data storage devices and services. Data access software from companies like IBM and Informatica gives users the ability to use SQL and NoSQL standards to access the underlying data. Together, these layers provide the same standard access to data whether it is hosted on-premises, across multiple clouds, or in a combination of both.
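To make the "one interface, many backends" idea concrete, here is a minimal Python sketch of a unified access layer. It is not how IBM, Informatica, or any other vendor implements a Data Fabric; the class names (`DataFabric`, `CsvSource`, `JsonSource`, `SqlSource`) and the `register`/`read` methods are hypothetical. An in-memory SQLite database stands in for a SQL store, and a simple Python predicate stands in for the SQL or NoSQL query interface a real product would expose. The point it illustrates is that callers read every dataset the same way, and the storage location is only catalog metadata.

```python
import csv
import io
import json
import sqlite3
from typing import Callable, Dict, Iterable, List, Protocol

Record = Dict[str, object]


class DataSource(Protocol):
    """Anything that can yield rows as dictionaries, regardless of format."""

    def records(self) -> Iterable[Record]: ...


class CsvSource:
    def __init__(self, text: str) -> None:
        self.text = text

    def records(self) -> Iterable[Record]:
        # csv.DictReader yields every value as a string.
        return csv.DictReader(io.StringIO(self.text))


class JsonSource:
    def __init__(self, text: str) -> None:
        self.text = text

    def records(self) -> Iterable[Record]:
        # Assumes the JSON document is a list of objects.
        return json.loads(self.text)


class SqlSource:
    def __init__(self, conn: sqlite3.Connection, table: str) -> None:
        self.conn = conn
        self.table = table  # assumed to be a trusted, fixed table name

    def records(self) -> Iterable[Record]:
        cur = self.conn.execute(f"SELECT * FROM {self.table}")
        columns = [c[0] for c in cur.description]
        return (dict(zip(columns, row)) for row in cur)


class DataFabric:
    """Hypothetical unified access layer: one catalog, one read interface."""

    def __init__(self) -> None:
        self._catalog: Dict[str, DataSource] = {}
        self._locations: Dict[str, str] = {}

    def register(self, name: str, source: DataSource, location: str) -> None:
        # The location ("on-prem", "cloud-a", ...) is recorded as metadata only;
        # callers never need it to read the data.
        self._catalog[name] = source
        self._locations[name] = location

    def read(self, name: str,
             where: Callable[[Record], bool] = lambda r: True) -> List[Record]:
        # Same call for every backend: fetch rows, keep those matching `where`.
        return [r for r in self._catalog[name].records() if where(r)]


# Usage: three formats in three (pretend) locations, one way to read them.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER, region TEXT)")
conn.executemany("INSERT INTO orders VALUES (?, ?)", [(1, "east"), (2, "west")])

fabric = DataFabric()
fabric.register("customers", CsvSource("id,name\n1,Ada\n2,Lin\n"), location="on-prem")
fabric.register("events", JsonSource('[{"id": 1, "type": "click"}]'), location="cloud-a")
fabric.register("orders", SqlSource(conn, "orders"), location="cloud-b")

print(fabric.read("customers", lambda r: r["name"] == "Ada"))  # [{'id': '1', 'name': 'Ada'}]
print(fabric.read("orders", lambda r: r["region"] == "west"))   # [{'id': 2, 'region': 'west'}]
```

A real Data Fabric would push filters down to each backend instead of scanning rows in Python; the sketch trades that efficiency for brevity.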

Data Mesh

We typically think of a mesh as being made of fabric, and you can think of a Data Mesh the same way: a Data Fabric can be used to implement a Data Mesh. A Data Mesh is a design pattern for making data more accessible and consumable for end users. The Data Mesh design pattern is based on approaches software developers use to create modern, distributed software applications. A Data Mesh splits data into separate domains. Data creators can publish and maintain their data as their needs demand. They also control the data’s governance: who has access to it and for how long. While data creators own and maintain their data, supporting technologies make the resulting data products easily accessible to customers.
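The ownership and governance rules above can be sketched in a few lines of Python. This is a toy model under stated assumptions, not a standard Data Mesh API: `DataMesh`, `DataProduct`, `publish`, `grant`, and `read` are hypothetical names, products are keyed as `"domain.name"`, and access grants are simple per-consumer expiry times. It shows the two ideas from the paragraph: each domain publishes and updates its own data products, and only the owning domain decides who may read them and for how long.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from typing import Dict, List


@dataclass
class DataProduct:
    name: str
    owner_domain: str
    rows: List[dict]
    # consumer -> moment at which that consumer's access expires
    grants: Dict[str, datetime] = field(default_factory=dict)


class DataMesh:
    """Hypothetical registry: each domain publishes and governs its own products."""

    def __init__(self) -> None:
        self._products: Dict[str, DataProduct] = {}

    def publish(self, domain: str, name: str, rows: List[dict]) -> None:
        key = f"{domain}.{name}"
        existing = self._products.get(key)
        # Republishing keeps existing grants, so owners can update data freely.
        grants = existing.grants if existing else {}
        self._products[key] = DataProduct(name, domain, rows, grants)

    def grant(self, domain: str, key: str, consumer: str, until: datetime) -> None:
        product = self._products[key]
        # Only the owning domain may decide who gets access and for how long.
        if product.owner_domain != domain:
            raise PermissionError(f"{domain} does not own {key}")
        product.grants[consumer] = until

    def read(self, consumer: str, key: str, now: datetime) -> List[dict]:
        product = self._products[key]
        expires = product.grants.get(consumer)
        # Deny if the consumer was never granted access or the grant has lapsed.
        if expires is None or now >= expires:
            raise PermissionError(f"{consumer} has no current access to {key}")
        return product.rows


# Usage: the sales domain shares its orders with marketing for 30 days.
start = datetime(2022, 1, 1)
mesh = DataMesh()
mesh.publish("sales", "orders", [{"id": 1, "total": 40.0}])
mesh.grant("sales", "sales.orders", consumer="marketing", until=start + timedelta(days=30))

print(mesh.read("marketing", "sales.orders", now=start + timedelta(days=1)))
```

Passing `now` explicitly keeps the expiry check deterministic; a production system would read the clock and persist grants in a catalog or policy service rather than in memory.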

In short, Data Fabric technologies provide standardized access to data, while Data Mesh technologies organize that data.

The right combination of a Data Fabric architecture and a Data Mesh design pattern provides a standardized method for storing, organizing, and controlling how data is created, consumed, and shared with data product users. Data Fabrics and Data Meshes can solve many organizational challenges with data handling. As with many technologies, the key to making them work is careful planning and a methodical rollout. We are unlikely to slow down how much data we generate every day. Data Fabrics and Data Meshes may be the key to keeping us from being blinded by the constant influx of information.